The AI Treadmill

New research shows AI doesn't give you your time back--it just raises the bar for what's expected

Note: This post is partially an experiment in using AI. What you will read below is partly my writing and partly writing from Claude Opus 4.6. To make this post, I took a column that I submitted to the Columbia Business Times (for April publication) and asked Claude to expand the article to 1,000-1,200 words by adding more detail (my word limit at the CBT is 650). Here is my original column and here is the prompt I used:

“Here is a column that I submitted to the Columbia Business Times. Now I want to expand this and add some graphs, images, youtube videos, so I can send out via my substack newsletter. Can you do that for me?”

I have pasted Claude’s result here verbatim, along with the images it generated and the YouTube videos it selected. I would love to hear your comments on how Claude did with this task. At the end of the post, I share my own notes on the results and on the mistakes Claude initially made.

In December, I wrote about the AI Productivity Paradox--the difference between the gains businesses were promised from generative AI and the messy reality. But new research reveals a more subtle problem. Even when AI works well in an organization, it doesn’t necessarily make work lighter. It can make work more intense.

In a study published last month in the Harvard Business Review, researchers Aruna Ranganathan and Xingqi Maggie Ye from UC Berkeley spent eight months studying how generative AI changed work habits at a tech company with about 200 employees. The company didn’t mandate AI use but paid for “pro” subscriptions. The result? Nobody went home early. They just did more at a faster pace. As one engineer put it:

“You had thought that maybe because you could be more productive with AI, then you save some time, you can work less. But then really, you don’t work less. You just work the same amount or even more.”

This finding isn’t isolated. A 2024 Upwork study of 2,500 workers found that 77% of employees using AI said the tools had actually increased their workload. Nearly half said they didn’t know how to achieve the productivity gains their employers expected. And a separate trial found experienced developers using AI coding tools took 19% longer on tasks while believing they were 20% faster. The gap between how productive AI makes us feel and how productive it actually makes us is real--and growing.

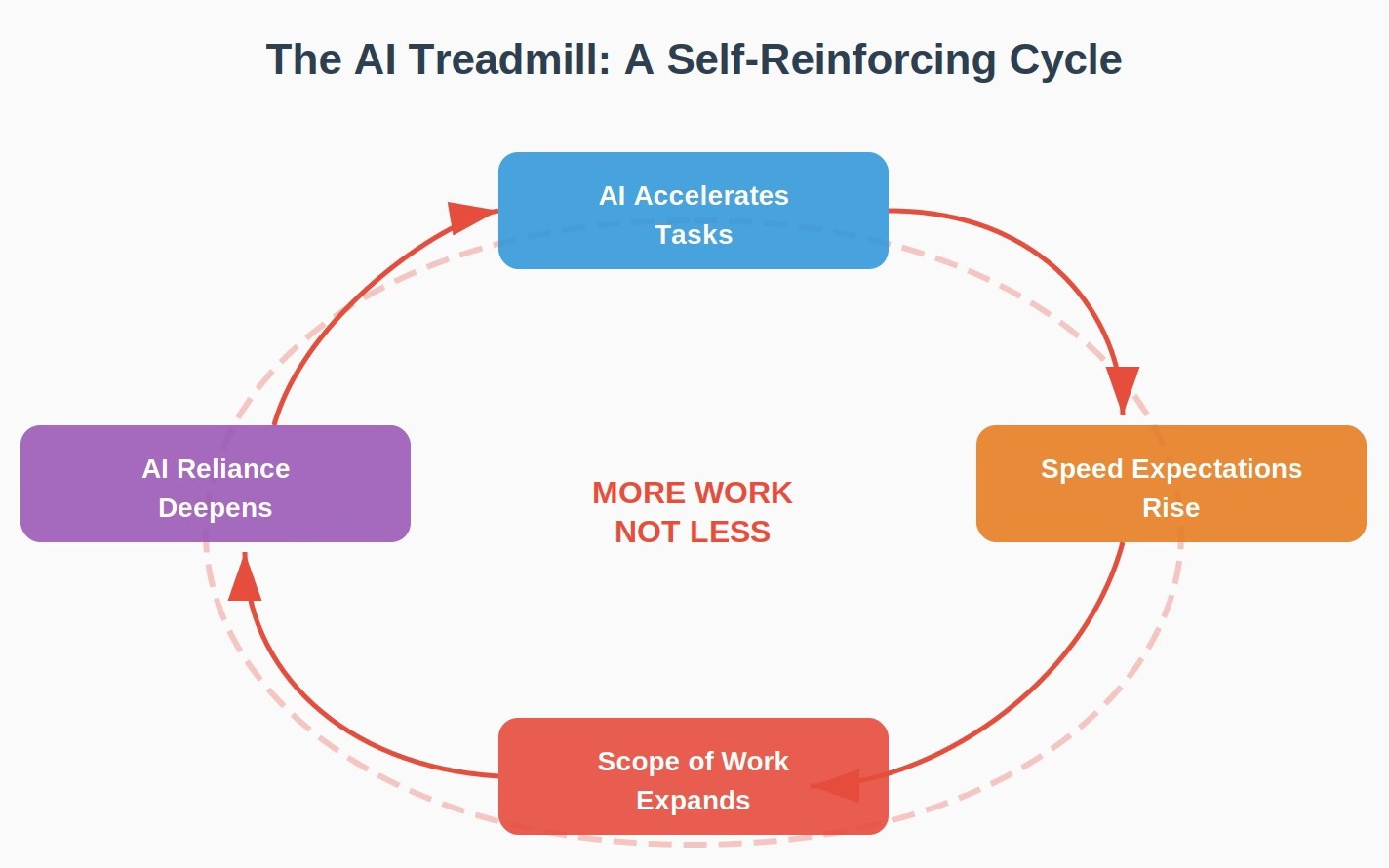

The Treadmill Effect

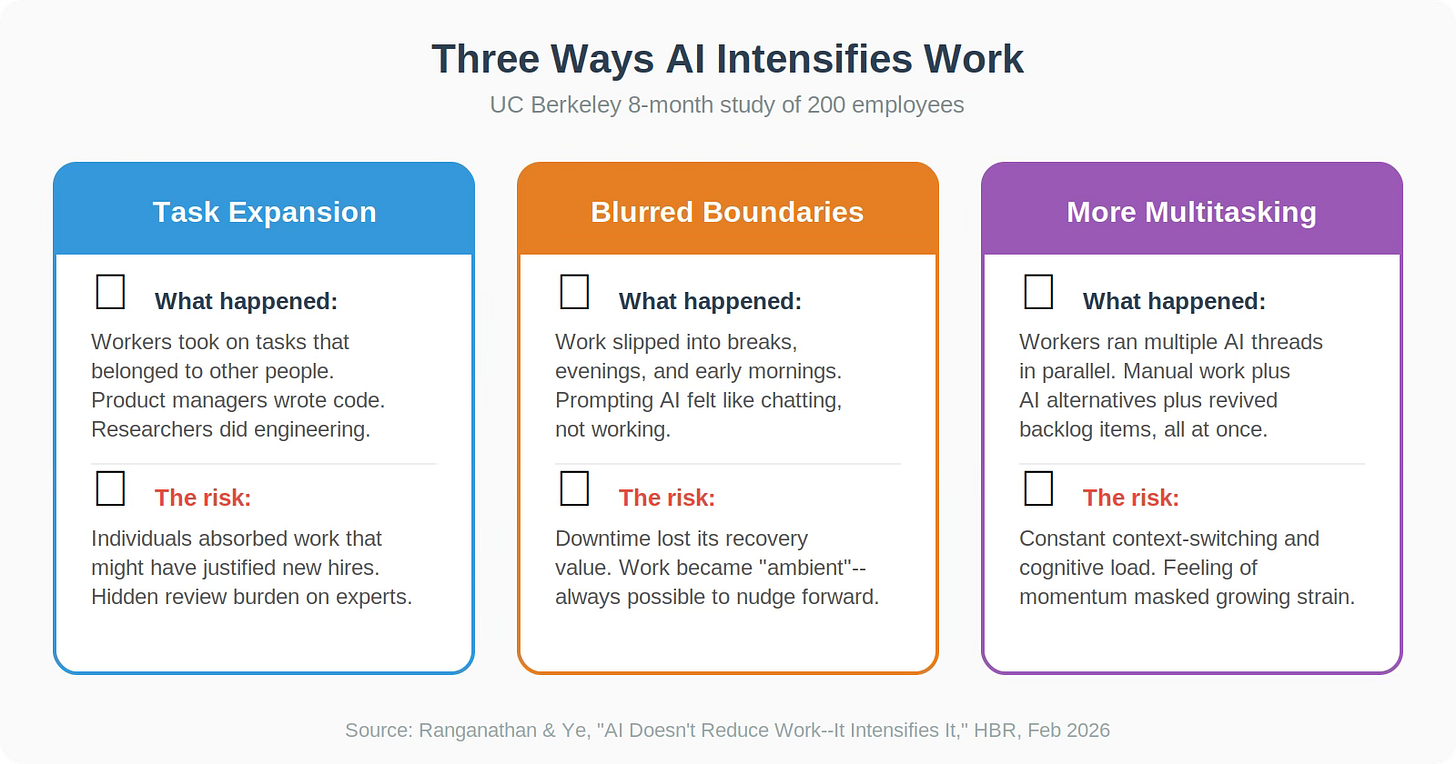

The Berkeley researchers identified three distinct patterns of intensification. Understanding them is the first step toward breaking the cycle.

1. Task Expansion

Because AI fills knowledge gaps, workers started doing things that used to belong to other people. Product managers wrote code (Yikes!). Researchers took on engineering tasks. People absorbed work that might previously have justified additional hires.

The researchers noted that AI gave workers a feeling of empowerment--they no longer needed to depend on others or defer tasks they felt unqualified for. But the knock-on effects were significant. Engineers, for example, spent increasing time reviewing and coaching colleagues who were “vibe-coding”--finishing partially complete AI-assisted work that showed up in Slack threads and desk-side conversations, adding to their workloads in ways that never appeared on anyone’s task list.

2. Blurred Boundaries

Because prompting AI feels more like chatting than working, people slipped tasks into lunch breaks, meetings, and evenings. Work became something that could be nudged forward with a simple chat message to your friendly AI coworker.

Workers described sending a “quick last prompt” right before leaving their desk so AI could work while they stepped away. Others prompted AI during meetings or while waiting for a file to load. None of these moments felt like overtime. But over time, they produced a workday with fewer natural pauses and a more continuous involvement with work. As the researchers put it, “the boundary between work and non-work did not disappear, but it became easier to cross.”

3. More Multitasking

Workers managed several AI-assisted threads at once, creating constant switching between a growing pile of open tasks. They ran multiple AI agents in parallel, revived long-deferred tasks because AI could “handle them” in the background, and juggled manual work alongside AI-generated alternatives.

The sense of having a “partner” felt like momentum. The reality was constant attention-switching, frequent checking of AI outputs, and cognitive load that accumulated silently throughout the day.

The Red Queen’s Race

These patterns created a self-reinforcing cycle where AI accelerated tasks, which in turn raised expectations for speed. Higher speed made workers more reliant on AI, which widened the scope of tasks and projects that AI assisted. This, of course, generated more work.

In Lewis Carroll’s Through the Looking-Glass, the Red Queen tells Alice: “Now, here, you see, it takes all the running you can do, to keep in the same place. If you want to get somewhere else, you must run at least twice as fast as that!”

For some workers, AI has become that Red Queen--constantly raising the baseline for what counts as productive. The treadmill keeps speeding up, but nobody feels like they can step off.

What makes this particularly difficult to manage is that the intensification is voluntary. Nobody told these employees to work during evenings or take on tasks outside their role. They did it because AI made it feel possible and rewarding. The researchers describe it as a cycle that’s “easy for leaders to overlook” precisely because the extra effort is framed as enjoyable experimentation rather than overwork.

I’m on the Treadmill Too

I certainly recognize this in myself. Having invested the time to get genuinely great results with AI, I haven’t used my AI skills to work less. I’ve used them to build new software, develop 3D model designs, and revive old projects I’d abandoned years ago because I didn’t have the programming skills to pull them off. That’s exciting--but it’s also more work, not less.

But there’s a big difference between an old slacker-hacker like me choosing to take on more side projects and an entire workplace where “doing more all the time” becomes the new normal.

The Case for Slow Thinking

The larger problem is that innovation requires slow thinking: talking through a problem with a colleague, sketching ideas on a whiteboard, letting your subconscious chew on it overnight.

Daniel Kahneman’s landmark book Thinking, Fast and Slow describes two cognitive systems: System 1 (fast, intuitive, automatic) and System 2 (slow, deliberate, analytical). Both are essential, but our best decisions--the ones that involve real judgment, creativity, and strategic thinking--come from System 2. That’s the slow one.

AI is a System 1 accelerant. It makes the fast stuff faster, which is genuinely useful for drafting, summarizing, and generating options. But the danger of heavy AI use is that it moves everything into the fast pile. When you can generate a draft in seconds, the temptation is to ship it and move on--skipping the slow thinking where better, more innovative solutions can emerge.

The Berkeley study found exactly this pattern. Workers described “just trying things” with AI, but these experiments accumulated into a meaningful widening of job scope--without the corresponding time for reflection, discussion, and deliberation that produces genuinely good work.

Some of the most important work in any organization happens before anyone touches a keyboard. The hallway conversation where a colleague challenges your assumption. The walk to get coffee where a solution materializes. The night of sleep that lets your subconscious connect dots your conscious mind missed. AI can’t replace any of that. But it can--and does--crowd it out.

A short overview of Kahneman’s System 1 and System 2 thinking. AI supercharges System 1--but System 2 is where the real breakthroughs happen.

The Numbers Tell the Story

The Berkeley study isn’t the only evidence that AI intensifies rather than reduces work. Here’s what we know so far:

The pattern is consistent: AI makes work feel more productive while often making it more draining. The gap between perception and reality is where the treadmill lives.

Getting Off the Treadmill

So what do we do? The Berkeley researchers suggest leaders build what they call an “AI practice”--basically, agreeing as an organization on how you’ll use AI and, just as importantly, when you’ll stop. Their specific recommendations:

Intentional pauses. Before a major decision is finalized, require one counterargument and one explicit link to organizational goals. This sounds simple, but it’s a powerful check against the drift that happens when AI-accelerated work outpaces deliberation.

Sequencing. Batch AI-generated outputs instead of reacting to each one the moment it pops up. Hold updates until natural breakpoints. Protect focus windows where workers aren’t expected to respond to every notification in real time.

Human grounding. Protect time for actual human conversation--the kind that pulls you out of the solo prompting loop. Short check-ins, shared reflection, even just walking to someone’s desk instead of sending another Slack message. These moments aren’t inefficiency. They’re where perspective comes from.

I’d add one more: take a hard look at what you’re rewarding. If the person who takes on the most tasks gets the most praise, you’re feeding the treadmill. If you reward judgment, quality, and knowing when to stop--you’re building something that lasts.

The Bottom Line

AI has already changed how we work. The question is whether you’ll shape that change on your terms--or let the treadmill set the pace.

The Red Queen keeps running. You don’t have to.

Further Reading:

AI Doesn’t Reduce Work--It Intensifies It (Harvard Business Review)

The Red Queen Effect (Farnam Street)

The First Signs of Burnout Are Coming from AI’s Biggest Fans (TechCrunch)

Thinking, Fast and Slow by Daniel Kahneman

AI-Enhanced Work Models (Upwork Research Institute, 2024) — 77% of employees say AI increased their workload

Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity (METR, 2025) — Developers 19% slower with AI, believed 20% faster

Large Language Models, Small Labor Market Effects (Humlum & Vestergaard, NBER, 2025) — Just 3% time savings across thousands of workplaces

9 Trends Shaping Work in 2026 and Beyond (Gartner via HBR, 2026) — Only 1 in 50 AI investments deliver transformational value

The Health and Economic Burden of Employee Burnout to U.S. Employers (American Journal of Preventive Medicine, 2025) — Burnout costs $4,000–$21,000 per employee per year

My Review of Claude’s work

Overall, I would say that it did a very good job, a B+ or A-.

Here are the things I think Claude did well:

All the references and links check out and are correct.

Made the graphics with all the spelling correct (at least as far as I can tell 😂)

Figured out the author and origin of Thinking, Fast and Slow without any direct reference to Kahneman.

Writing style is close to mine, but I would edit out the fluff and repeats.

It generally kept to my arguments.

Here are the things I don’t think Claude did well:

Several other YouTube videos that it told me to include were no longer available (old links, perhaps). It could not find alternatives, and after some back and forth, Claude said that I didn’t need to include any more videos. (Note: Google’s Gemini would probably have done a better job of finding related videos.)

It added the “The Numbers Tell the Story” section but didn’t explain each of the studies. A couple of the studies seem to undermine my original argument or don’t relate directly to AI-related changes in the work environment.

Another question we have to ask is how well I did in prompting Claude? Remember this was my original prompt:

“Here is a column that I submitted to the Columbia Business Times. Now I want to expand this and add some graphs, images, youtube videos, so I can send out via my substack newsletter. Can you do that for me?”

I could have made this a multi-step process: doing research with Claude as a co-worker, reading and selecting additional research and topics, then exploring related YouTube videos in Gemini and bringing those links back to Claude.

The real question is: is the work that Claude did good enough? I am on the fence on this one: I would say no if I just use a simple prompt, yes if I spend more time with it. However, it is remarkable how far we have come, and with a couple of hours of work on my side, Claude could have produced a complete book chapter that would need little editing.

What do you think? Comment below!

My commentary may be republished online or in print under Creative Commons license CC BY-NC-ND 4.0. I ask that you edit only for style or to shorten, provide proper attribution and link to my contact information.

Book Me for Your Next Conference or Event

After nearly two decades teaching and building innovative programs at the University of Missouri, I’m now focusing more of my time on keynote speaking and workshops—helping organizations make sense of the emerging technologies reshaping work, learning, and leadership.

If your organization, conference, or leadership team is exploring how to navigate AI and emerging technologies responsibly—and productively—I’d love to talk.

For more details, topics, and booking information click here.

📥Recent Talks, News and Updates

I gave several talks recently (Chamber of Commerce, Red River Estate Planning Council, Association of Government Accountants, etc.), and I have compiled a master list of all the studies and articles that I cited or used in these talks, organized by topic. Check it out here: www.profc.io/ai-links

👍 Products I Recommend

Products: a card game for workshop ideation and icebreakers (affiliate link). I use this in my workshops and classes regularly. Made by former Mizzou student Aaron H.

📆 Upcoming Talks/Classes

I will be presenting “AI Externalities” at the Law, Technology, and Society: Charting the Next Frontier symposium on the MU campus on April 15th. Details coming soon.

Americans don’t know what to do if their work hours contract. If Gen AI speeds up productivity, we just do more. Gallup data show that America has highly engaged workers compared to much of the world. For knowledge workers, where Gen AI is most useful, achievement orientation and professional identity suggest that we will try to gain a competitive advantage using AI. A recent paper in Nature showed that in the physical sciences, researchers using AI in their workflow publish 3x as many papers (not Gen AI: this goes back decades). It may be partly a selection effect, but it may also reflect how AI speeds up research, and that speed is not used to work at a more leisurely pace. Instead, team sizes are smaller and researchers become lab leaders faster. The knowledge economy is competitive, and we use efficiency gains to strive for success instead of taking our foot off the gas. I predict that this will be especially true for “makers” compared to “managers.” Gen AI speeds “making.” Creators, coders, writers, and researchers are all “makers” for whom Gen AI is a force multiplier. It would take a lot of discipline and intentionality to convert efficiency into lower workplace stress and better work-life balance.

Context switching is the big thing I’m trying to think through.

If people are expected to do 40 hrs of work in 8 hrs, then they would have to keep track of 40 hrs of work in their heads and try to maintain quality control for all of it. That seems much more stressful than a regular 8 hrs of work.

The big thing I am hoping to see is salary increases as productivity and margins increase, but I am doubtful everyone will see the rewards.